You have probably seen the notes from Tim Hall and Julian Frey about the release of the new version of Enterprise Manager, 24ai (24.1.0.0.0). It was released on the Oracle edelivery site, and the documentation has since been released and can be accessed here. I recommend registering for the Oracle Enterprise Manager Technology Forum 2024 to see what is new in this version and what to expect when using EM 24ai.

Category Archives: Upgrade

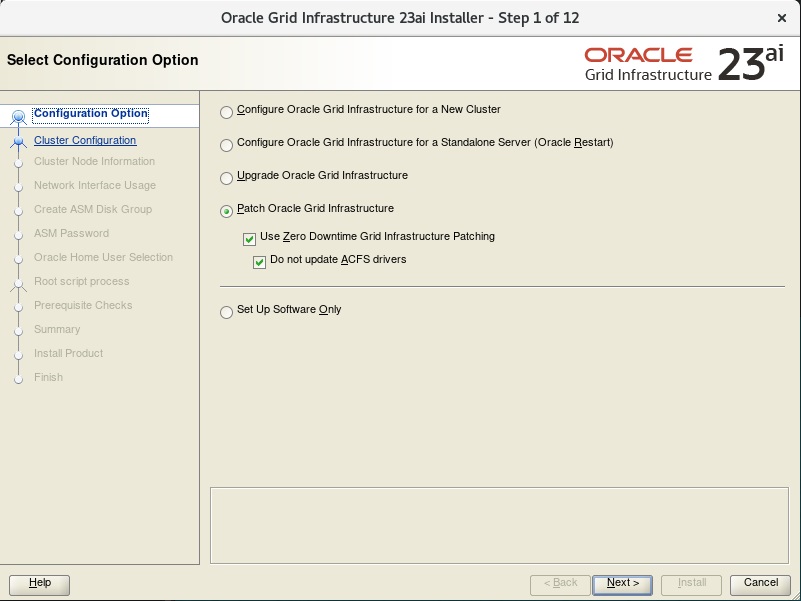

23ai, Zero-Downtime Oracle Grid Infrastructure Patching – GOLD IMAGE with Silent Install

My previous post was about the Zero-Downtime Oracle Grid Infrastructure Patching (ZDOGIP) for 23ai using the gold image. In that case, I used the GUI to do the installation and patch, but as you know, this does not suit automation. So, in this post, I will describe how to do the same operation using silent mode for the installation. I will show which parameters you need to set in the response file and all the other steps.

Important details

The focus of this post is to show how to do the same process as in my previous post, but using silent mode. I will not “prove” (as I did in the last one) that databases continue to receive inserts, or go into the details about the AFD/ACFS drivers not being updated. I really recommend that you read my previous post to understand all of these details. Here I will show how to do in silent mode what I did in the previous post.

23ai, Zero-Downtime Oracle Grid Infrastructure Patching – GOLD IMAGE

As you know, 23ai was released for Cloud and Engineered Systems (Exadata and ExaCC) first, and I already explored these in previous posts. Since patches have already started to be released, now with the patch for 23.6, we can re-test the Zero-Downtime Oracle Grid Infrastructure Patching (ZDOGIP) feature. The steps here are not specific to the Exadata version and can be used for any 23ai version.

I already demonstrated how to use it for 21c (using both graphical and silent mode), and the same can be done for 19c as well.

But now, I will show how to do it for 23ai, and this post includes:

- Install the Grid Infrastructure 23.6.0.24.10, using the Gold Image

- Upgrade the GI from 23.5.0.24.07 to 23.6.0.24.10 using the Zero-Downtime Oracle Grid Infrastructure Patching

This will be done while the database is running to show that we can patch the GI without downtime. I will show how to do this:

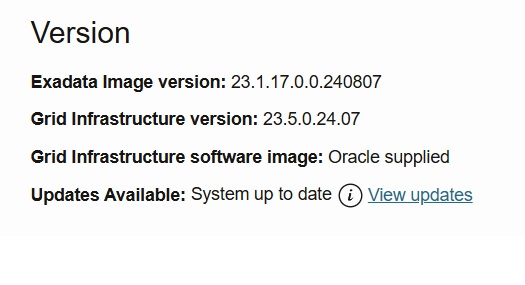

ExaCC, Upgrading from OEL 7 to OEL 8

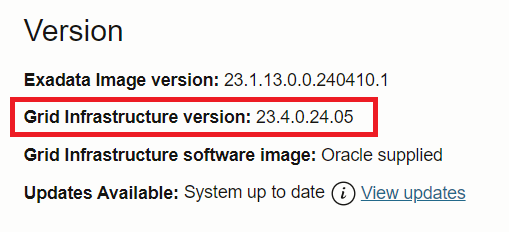

Recently I shared several posts about the process to upgrade the GI from 19c to 23ai at ExaCC. My last post summarizes a lot of this, so please read it here. But as you know, to use 23ai you need to be running OEL 8, and for ExaCC, the upgrade is quite simple. The goal is to reach this, “no updates” and “System up to date”:

Manually upgrading Oracle GI from 19c to 23ai

With the official release of Oracle 23ai to Exadata on-prem, it is now possible to manually upgrade Grid Infrastructure (GI) from 19c to 23ai. Nowadays the process is simpler than it was in the past, and I already published several examples of how to do this:

- 23ai, Zero-Downtime Oracle Grid Infrastructure Patching using Release Update with Silent Install

- 23ai, Zero-Downtime Oracle Grid Infrastructure Patching – GOLD IMAGE with Silent Install

- 23ai, Zero-Downtime Oracle Grid Infrastructure Patching – GOLD IMAGE

- Adding the parameter to allow the installation of GI 23ai at On-Prem.

- Upgrading to GI 23ai at ExaCC using CLI

- Upgrading to GI 23ai at ExaCC

- 21c, Zero-Downtime Oracle Grid Infrastructure Patching – Silent Mode

- 21c, Zero-Downtime Oracle Grid Infrastructure Patching

- 21c Grid Infrastructure Upgrade

- 19c Grid Infrastructure Upgrade

- Reaching Exadata 18c (this includes upgrades of GI from 12.1 to 12.2, and also from 12.2 to 18c)

So, there are several examples that you can use as a guide to go from GI 12.1 to 19c. In this post, I will upgrade from GI 19.23 (19.23.0.0.240416) to GI 23.5 (23.5.0.24.07).

Upgrading to GI 23ai at ExaCC using CLI

As you know, the 23ai is already available in several environments, mainly in Oracle Cloud, and one of these is the ExaCC. I already covered how to do the upgrade to 23ai for Grid Infrastructure (GI) using the Web interface, and Christian covered the upgrade of the OCI CLI. But here I will upgrade using the ExaCC CLI (dbaascli).

Again, first things first. Requirements

As discussed in the previous post, the first requirement is that your VM/domU is running version 23.1.x, because it runs on OEL 8. The second one is that the only versions allowed to be installed in the cluster are 19c and 23ai. The last one is that the attribute “compatible.rdbms” needs to be at least 19.0.0.0 for your diskgroups:

    SQL> SELECT name, compatibility, database_compatibility FROM v$asm_diskgroup;

    NAME                           COMPATIBILITY                                                DATABASE_COMPATIBILITY
    ------------------------------ ------------------------------------------------------------ ------------------------------------------------------------
    DATAC5                         19.0.0.0.0                                                   11.2.0.4.0
    RECOC5                         19.0.0.0.0                                                   11.2.0.4.0

    SQL>
    SQL> ALTER DISKGROUP DATAC5 SET ATTRIBUTE 'compatible.rdbms' = '19.0.0.0.0';

    Diskgroup altered.

    SQL> ALTER DISKGROUP RECOC5 SET ATTRIBUTE 'compatible.rdbms' = '19.0.0.0.0';

    Diskgroup altered.

    SQL>
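If you want to script this requirement check, here is a minimal sketch in Python. It only compares version strings; it does not connect to ASM. The function names (`parse_version`, `diskgroups_below`) are hypothetical helpers made up for this illustration, and the rows are simply copied from the query output before the ALTER DISKGROUP commands. In v$asm_diskgroup, the DATABASE_COMPATIBILITY column holds the compatible.rdbms value.

```python
def parse_version(text, width=5):
    """Turn '19.0.0.0.0' into (19, 0, 0, 0, 0) so versions compare numerically.

    Short versions such as '19.0.0.0' are padded with zeros up to `width`
    components, so '19.0.0.0' and '19.0.0.0.0' compare as equal.
    """
    parts = [int(part) for part in text.strip().split(".")]
    parts += [0] * (width - len(parts))
    return tuple(parts)


def diskgroups_below(rows, minimum="19.0.0.0"):
    """Return the names of disk groups whose compatible.rdbms is below `minimum`.

    `rows` is a list of (name, compatibility, database_compatibility) tuples,
    in the same column order as the v$asm_diskgroup query.
    """
    floor = parse_version(minimum)
    return [name for name, _asm, rdbms in rows if parse_version(rdbms) < floor]


# Values copied from the query output (before the ALTER DISKGROUP commands).
rows = [
    ("DATAC5", "19.0.0.0.0", "11.2.0.4.0"),
    ("RECOC5", "19.0.0.0.0", "11.2.0.4.0"),
]

# Print the statement that would be needed for each disk group below the minimum.
for name in diskgroups_below(rows):
    print(f"ALTER DISKGROUP {name} SET ATTRIBUTE 'compatible.rdbms' = '19.0.0.0.0';")
```

With the values above, both disk groups are reported, which matches the two ALTER DISKGROUP commands that I executed.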

Upgrading to GI 23ai at ExaCC

23ai was released last month and was only available in Oracle Cloud deployments and a few places as the Free edition, nothing besides that. Last year it was also released (focused on developers) under its former name, 23c Free. Fortunately, it has now been released for use at ExaCC. So, now we can upgrade Grid Infrastructure (GI) and install the database to play with it.

In all previous scenarios, we had some constraints. For developers, we didn’t have RAC, DG, and GI features at all. And for OCI, we didn’t have access to manually create databases or deploy GI by ourselves. For ExaCC we are free to deploy our GI, install RAC databases, and so on. Here I will show how to upgrade your GI from 19c to 23ai. We will reach this:

Exadata version 23.1.0.0.0 – Part 04

On 08/March/2023 the Oracle Exadata team released version 23.1.0.0.0, and this includes a significant change, OEL 8. I already explained that in my first post, which you can read here. In my previous posts, I already described how to patch storage and switch, and the dom0. In this post, I will discuss how to patch the domU.

What you can do

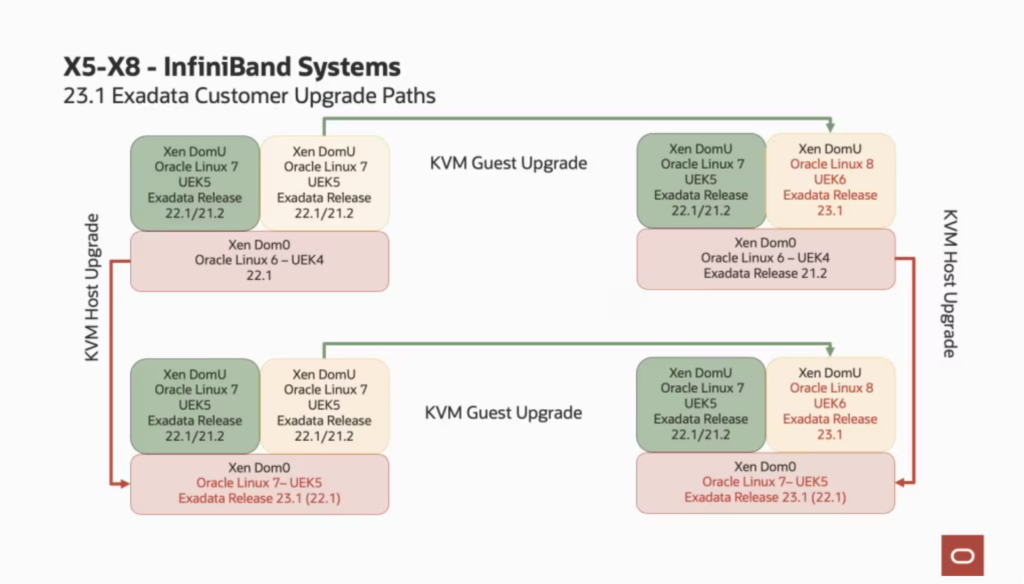

I already wrote this previously, but it is important to understand the upgrade paths that you can take: If you are running the old Exadata with InfiniBand, your dom0 can only be updated up to Oracle Linux 7 with UEK5. For domU, you can upgrade to OEL 8. And you can upgrade in any order, dom0 first or domU first. If you are running RoCE, your dom0 can run the latest OEL 8 with UEK6. The blog post from Oracle gives an excellent explanation of the upgrade paths, and below you can see the images from it (I used the image from their post).

Exadata version 23.1.0.0.0 – Part 03

On 08/March/2023 the Oracle Exadata team released version 23.1.0.0.0, and this includes a significant change, OEL 8. I already explained that in my first post, which you can read here. In my second post, I wrote about how to patch storage and switch. In this post, I will discuss how to patch the dom0.

What you can do

Due to the changes for OEL 8, it is important to understand the upgrade paths that you can take. As I wrote in my first post: If you are running the old Exadata with InfiniBand, your dom0 can only be updated up to Oracle Linux 7 with UEK5. For domU, you can upgrade to OEL 8. And you can upgrade in any order, dom0 first or domU first. If you are running RoCE, your dom0 can run the latest OEL 8 with UEK6. The blog post from Oracle gives an excellent explanation of the upgrade paths, and below you can see the images from it (I used the image from their post).

So, since the environment that I am patching is Exadata with InfiniBand, my dom0 will be upgraded only up to OEL 7 running UEK5. But the Exadata-related software will be upgraded to version 23.1. The domU will be upgraded to OEL 8, with UEK6. So, basically, it will be this (I used the image from the Exadata Team post):

Here, I patched the dom0 first because, once it is patched, all the versions already released for domU will be compatible with it. I am upgrading, so the dom0 running at 23.1 will be compatible with a domU running at a lower version.
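The upgrade paths described in this post can also be written down as a small lookup table. This is only a sketch in Python to summarize the text. The names `UPGRADE_TARGET` and `target_os` are hypothetical, and the RoCE domU entry is my assumption, since the text only states the RoCE target for the dom0.

```python
# Highest OS level each layer can reach with Exadata 23.1, as described in this post.
# Keys are (fabric, layer), both in lowercase.
UPGRADE_TARGET = {
    ("infiniband", "dom0"): "OEL 7 with UEK5",
    ("infiniband", "domu"): "OEL 8 with UEK6",
    ("roce", "dom0"): "OEL 8 with UEK6",
    # Assumption: not stated in the text, which only covers the RoCE dom0.
    ("roce", "domu"): "OEL 8 with UEK6",
}


def target_os(fabric, layer):
    """Return the OS target for a fabric ('InfiniBand' or 'RoCE') and layer ('dom0' or 'domU')."""
    return UPGRADE_TARGET[(fabric.lower(), layer.lower())]


# The environment patched in this post: InfiniBand, virtualized.
for layer in ("dom0", "domU"):
    print(f"{layer}: {target_os('InfiniBand', layer)}")
```

For my InfiniBand environment, this gives OEL 7 with UEK5 for the dom0 and OEL 8 with UEK6 for the domU, the same result shown in the image from the Exadata Team post.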

Exadata version 23.1.0.0.0 – Part 02

On 08/March/2023 the Oracle Exadata team released version 23.1.0.0.0, and this includes a significant change, OEL 8. I already explained that in my first post, which you can read here. Here I will show how to patch the switches and storage cells to version 23.1.0.0.

Patching

As you know, I have been working with Exadata since 2010 and have already posted about how to upgrade to version 19.x, version 18.x, version 12.x (Portuguese only), and many other details for Oracle Engineered Systems. Fortunately, I had the opportunity to apply version 23.1 to one environment and will show the details.

Here, my environment is:

- Exadata X6 for storage and dbnodes.

- InfiniBand Switches.

- Virtualized configuration (dom0 and domU).

- dom0 running over version 22.1.0.9.

- domU running over version 22.1.0.9.

- Grid Infrastructure running version 19.17.

Since I am running with dom0/domU, my base machine (from where I will call most of the patches) is the dom0. There, I have passwordless SSH access to all other cells, dbnodes, domU, and switches.

Before you start the patch, please check the readme for the patch and verify that everything is in compliance. Do not start any patch if you do not meet the requirements, even for simple items such as the database, grid, and switch versions. As well, do not start the patch if your machine has HW errors. So, please read the note Exadata System Software 23.1.0.0.0 Update (32829291) (Doc ID 2772585.1).