When we are enrolling a database at ZDLRA we need to configure the RMAN channel parameters to point to our definitions like RA_WALLET and CREDENTIAL_ALIAS. The same is done for backup and restore channels. But this can be done in a different way using RA_CLIENT_CONFIG_FILE for ra_library.

Category Archives: Backup

MOVE_BACKUP and ZDLRA

As I wrote previously, sometimes we need to have long-term/archival backups due to some compliance. And usually, these backups are stores outside (like a vault/bunker) but for sure not at the same datacenter as the database. But how we can do this at ZDLRA?

In my post about COPY_BACKUP, I wrote how to have an external copy of one backup set at ZDLRA. But this is not the best option when we need to archive some backup because it continues to follow the same recovery window as the original backup set. This means that if you need to have some kind of archive for 5 years, you need to define your recovery window (at the policy level) to this window. And for sure this will put high pressure on space usage because all backups will be stored until became obsolete.

So, the best way is to use the KEEP backups from rman. And as I wrote in my previous post, they not interact/broke with the incremental forever strategy. Is possible to generate the keep backup, and using the DBMS_RA.MOVE_BACKUP moves these backups to a filesystem destination (and further you can copy/store) and archive it outside of ZDLRA.

KEEP backups and ZDLRA

KEEP backups from rman are used to provide long-term and archival retention. They are used to bypass the default policy retention and are used to sustain some regulations/compliances (like HIPAA, or others) that require archival retention. But with ZDLRA they are treated in a different way. Here I will show how the KEEP backups can impact your backup strategy for ZDLRA.

COPY_BACKUP at ZDLRA

As you know, ZDLRA is one appliance dedicated to provides you zero data loss in several (planned and unplanned) outages. All the backups are stored inside of the delta store to be processed, and they are deconstructed, meaning that the rman backup set does not exist (as is the formal backup set file).

But sometimes we need to copy/extract some backups outside of ZDLRA and copy it to the filesystem. Maybe because some regulations/compliances need to store for long-term/archival purposes. But some caveats are important to be explained.

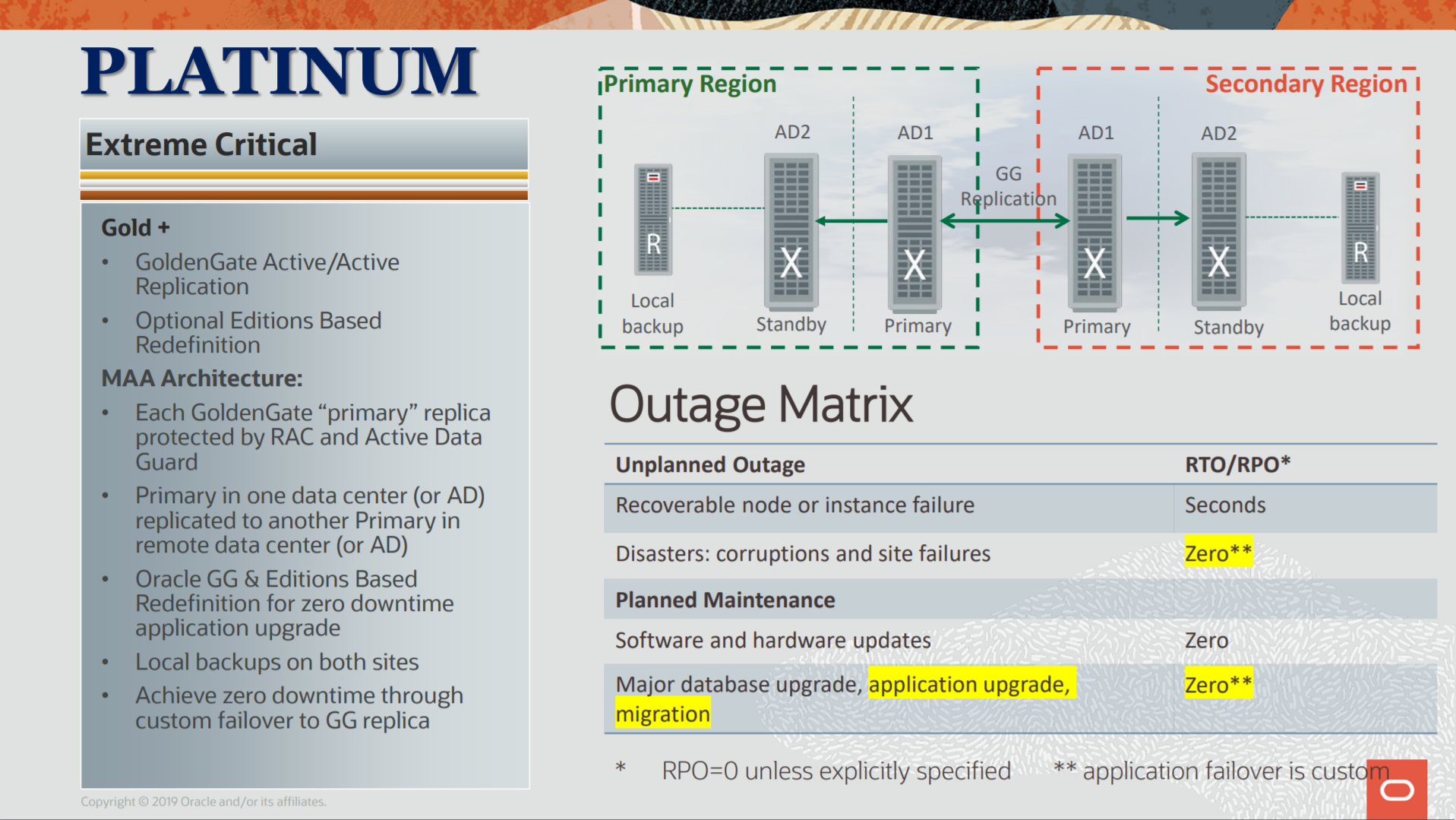

ZDLRA + MAA, Protection for Platinum Architecture

The Platinum architecture is the last defined at MAA references and is the highest level of protection that you can achieve for MAA. It goes beyond the Gold protection (that I explained in my previous post) and you can have application continuity even version upgrade for your database.

The image above was taken from https://www.oracle.com/a/tech/docs/maa-overview-onpremise-2019.pdf

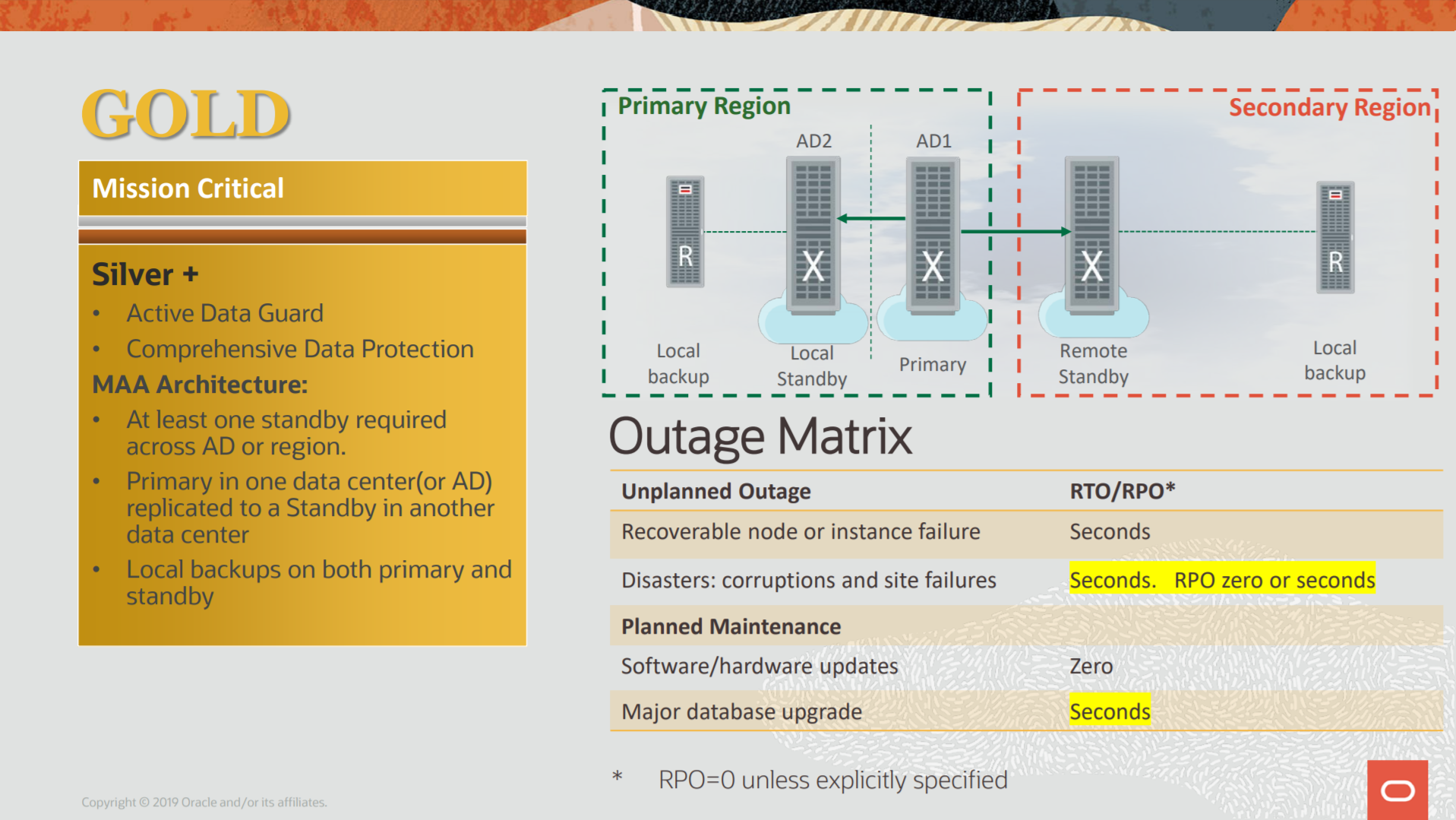

ZDLRA + MAA, Protection for Gold Architecture

The Gold architecture for MAA is used to emphasis the application continuity. All the possible outages (planned or no) are protected by Oracle features. Here we are one step further and start to design using multi-site architecture. Data Guard, RAC, Oracle Clusterware, everything is there. But even with these, ZDLRA is still needed to allow complete protection.

The image above taken from https://www.oracle.com/a/tech/docs/maa-overview-onpremise-2019.pdf.

With the MAA references, we have the blueprints and highlights how to protect them since the standalone/single instance until the multiple site database. But for Gold we are beyond RPO and RTO, they are important but application continuity and data continuity join to complete the whole picture.

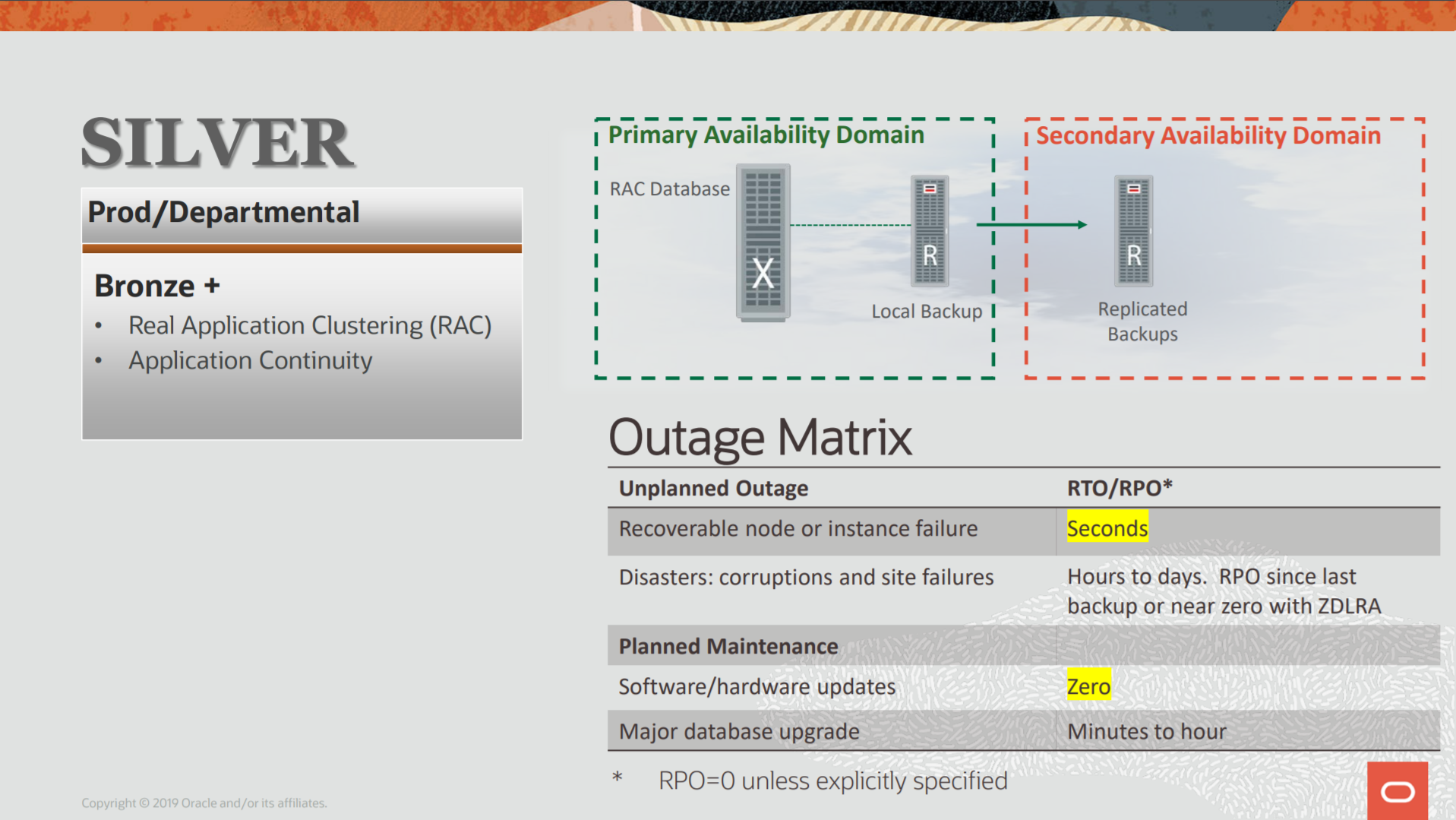

ZDLRA + MAA, Protection for Silver Architecture

The MAA defined Silver architecture for database environments that use (or need) high availability to survive for outages. The idea is having more than one single instance running, and to do that, it relies on Oracle Clusterware and Engineered Systems to mitigate the single point of failure. But is not just a database that gains with this, the Silver architecture is the first step to have application continuity. And again, ZDLRA is there since the beginning.

As you can see above, the Silver by MAA blueprints improves compared with Bronze architecture that I spoke at the last post. But the basic points are there: RPO and RTO. They continue to base rule here. And the goals are the same: Data Availability, Data Protection, Performance (no impact), Cost (lower cost), and Risk (reduce). More technical details here at the MAA Overview doc.

As you can see above, the Silver by MAA blueprints improves compared with Bronze architecture that I spoke at the last post. But the basic points are there: RPO and RTO. They continue to base rule here. And the goals are the same: Data Availability, Data Protection, Performance (no impact), Cost (lower cost), and Risk (reduce). More technical details here at the MAA Overview doc.

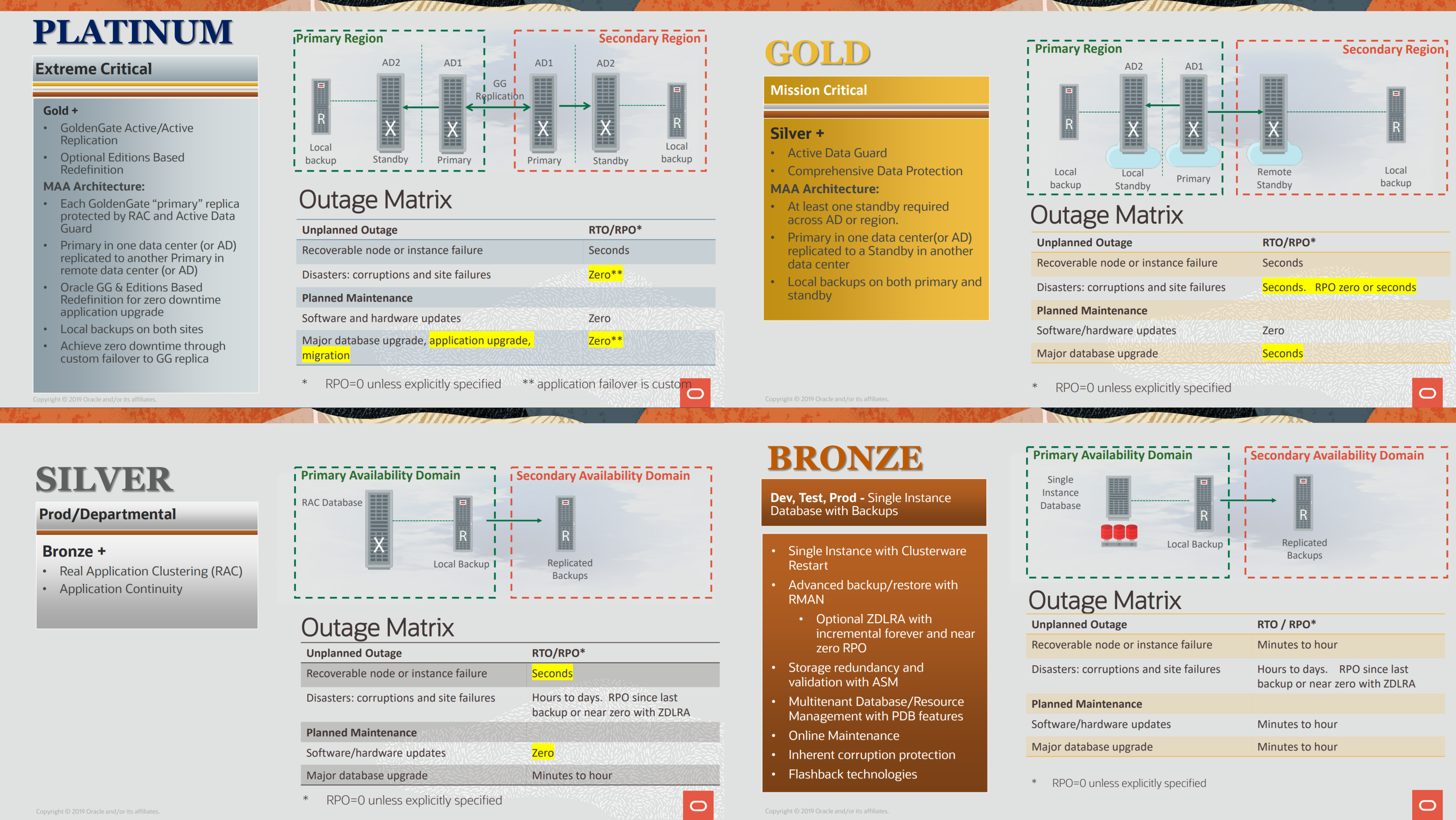

ZDLRA + MAA, Protection for Bronze Architecture

Oracle Maximum Availability Architecture (MAA) means more than just Data Guard or Golden Gate to survive outages, is related to data protection, data availability, and application continuity. MAA defines four reference architectures that can be used to guide during the deploy/design of your environment, and ZDLRA is there for all architectures.

Image above taken from https://www.oracle.com/a/tech/docs/maa-overview-onpremise-2019.pdf.

Image above taken from https://www.oracle.com/a/tech/docs/maa-overview-onpremise-2019.pdf.

With the MAA references, we have the blueprints and highlights how to protect them since the standalone/single instance until the multiple site database. The MAA goal is to survive an outage but also sustain: Data Availability, Data Protection, Performance (no impact), Cost (lower cost), and Risk (reduce).

ASM, Mount Restricted Force For Recovery

Survive to disk failures it is crucial to avoid data corruption, but sometimes, even with redundancy at ASM, multiple failures can happen. Check in this post how to use the undocumented feature “mount restricted force for recovery” to resurrect diskgroup and lose less data when multiple failures occur.

Diskgroup redundancy is a key factor for ASM resilience, where you can survive to disk failures and still continue to run databases. I will not extend about ASM disk redundancy here, but usually, you can configure your diskgroup without redundancy (EXTERNAL), double redundancy (NORMAL), triple redundancy (HIGH), and even fourth redundancy (EXTEND for stretch clusters).

If you want to understand more about redundancy you have a lot of articles at MOS and on the internet that provide useful information. One good is this. The idea is simple, spread multiple copies in different disks. And can even be better if you group disks in the same failgroups, so, your data will have multiple copies in separate places.

As an example, this a key for Exadata, where every storage cell is one independent failgroup and you can survive to one entire cell failure (or double full, depending on the redundancy of your diskgroup) without data loss. The same idea can be applied at a “normal” environment, where you can create failgroup to disks attached to controller A, and another attached to controller B (so the failure of one storage controller does not affect all failgroups). At ASM, if you do not create failgroup, each disk is a different one in diskgroups that have redundancy enabled.

ZDLRA, Dataguard, Archivelogs, and RMAN-08137

When configuring a database with Real-Time Redo at ZDLRA it is important to check the deletion policy for archivelog. This is even more important when the database is protected with dataguard. I already wrote about Real-time Redo in this previous post, and when using with dataguard in another post.

But sometimes (during maintenance as an example) you can face the error RMAN-08137: warning: archived log not deleted, needed for standby or upstream capture process if the deletion policy of archivelog is not aligned with your needs.