Since the 21c was public available the Data Guard per Pluggable Database – DG PDB – was intended to be there, but Oracle needed more time to make things work and some weeks ago released the feature with the 21.7 version. Here in this post, I will show to configure it and also how to troubleshoot, and the pitfalls of using it. As usual, all the steps, logs, and outputs are covered here and I hope that it helps you understand the whole DG PDB process.

My environment

The environment that I am using here is:

- Two databases running in RAC mode (two nodes in each cluster).

- ASM: same DATA and RECO diskgroups names in each cluster.

About the databases I have:

- ORADBDC1, that have the pdb PDBDC1. So, they represent the DC1.

- ORADBDC2, that have the pdb PDBDC2. So, they represent the DC2.

Each of these clusters is in a separate environment, this means that both are primary databases inside each datacenter. So, they have no DG configured between them.

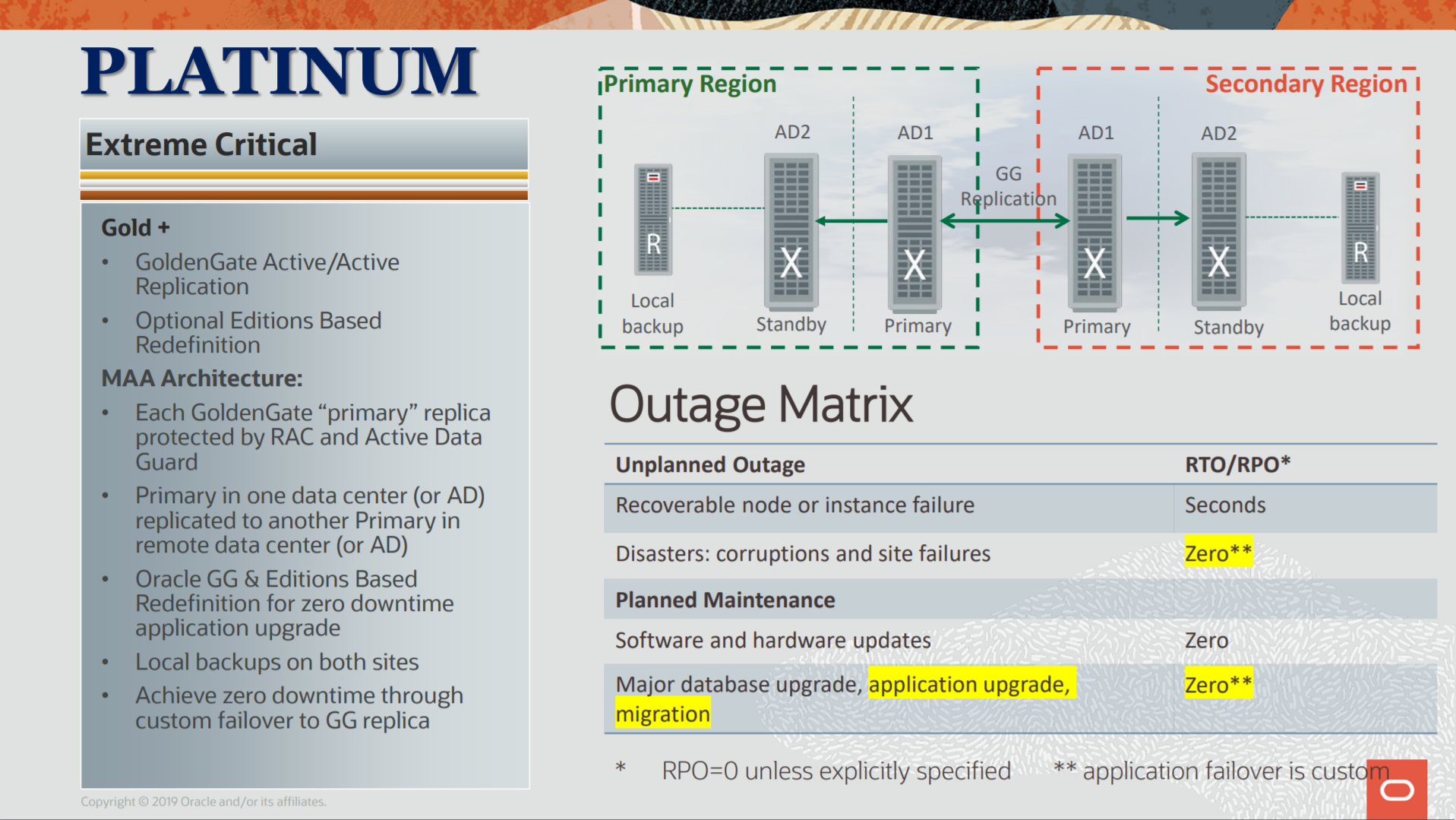

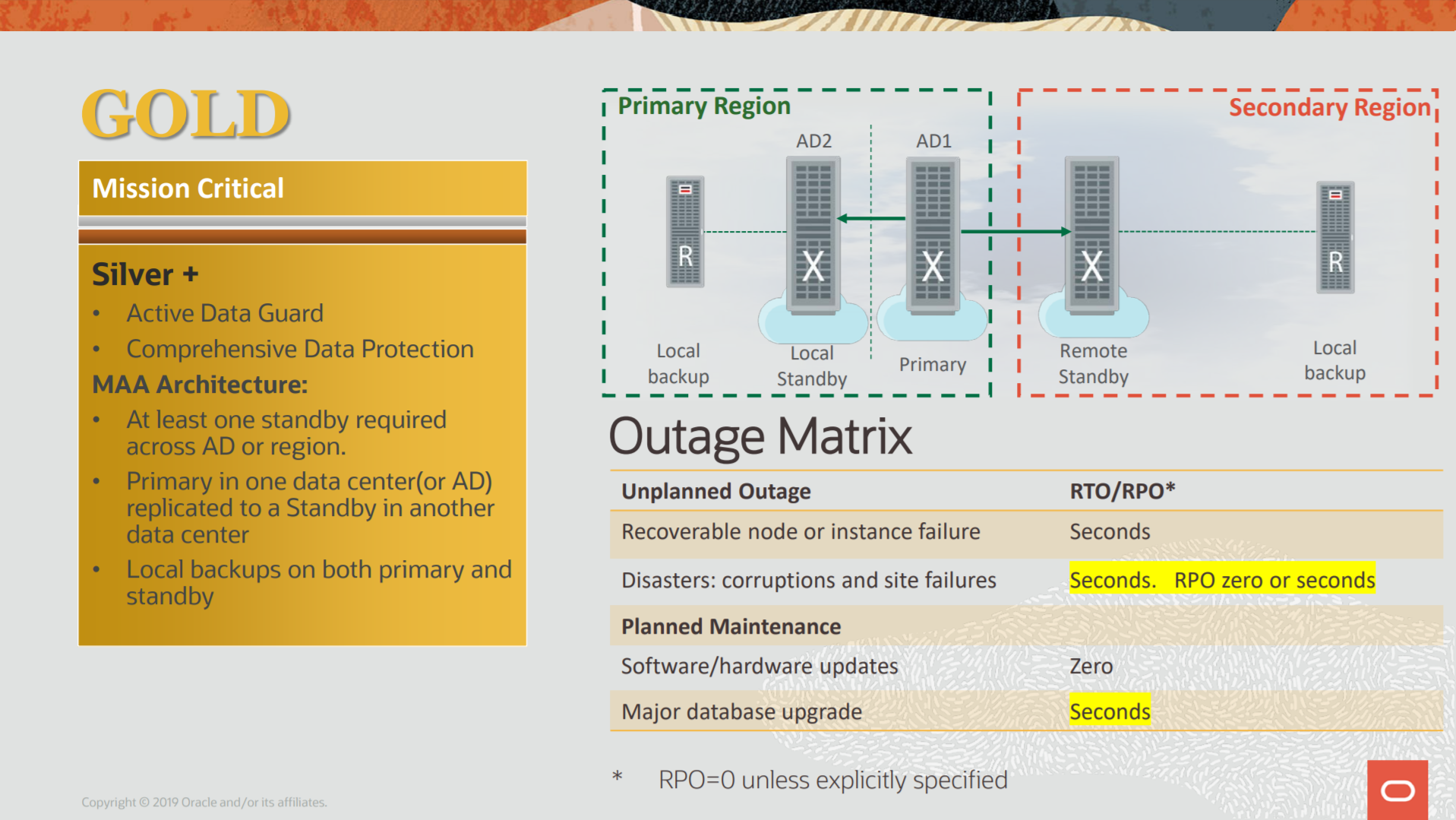

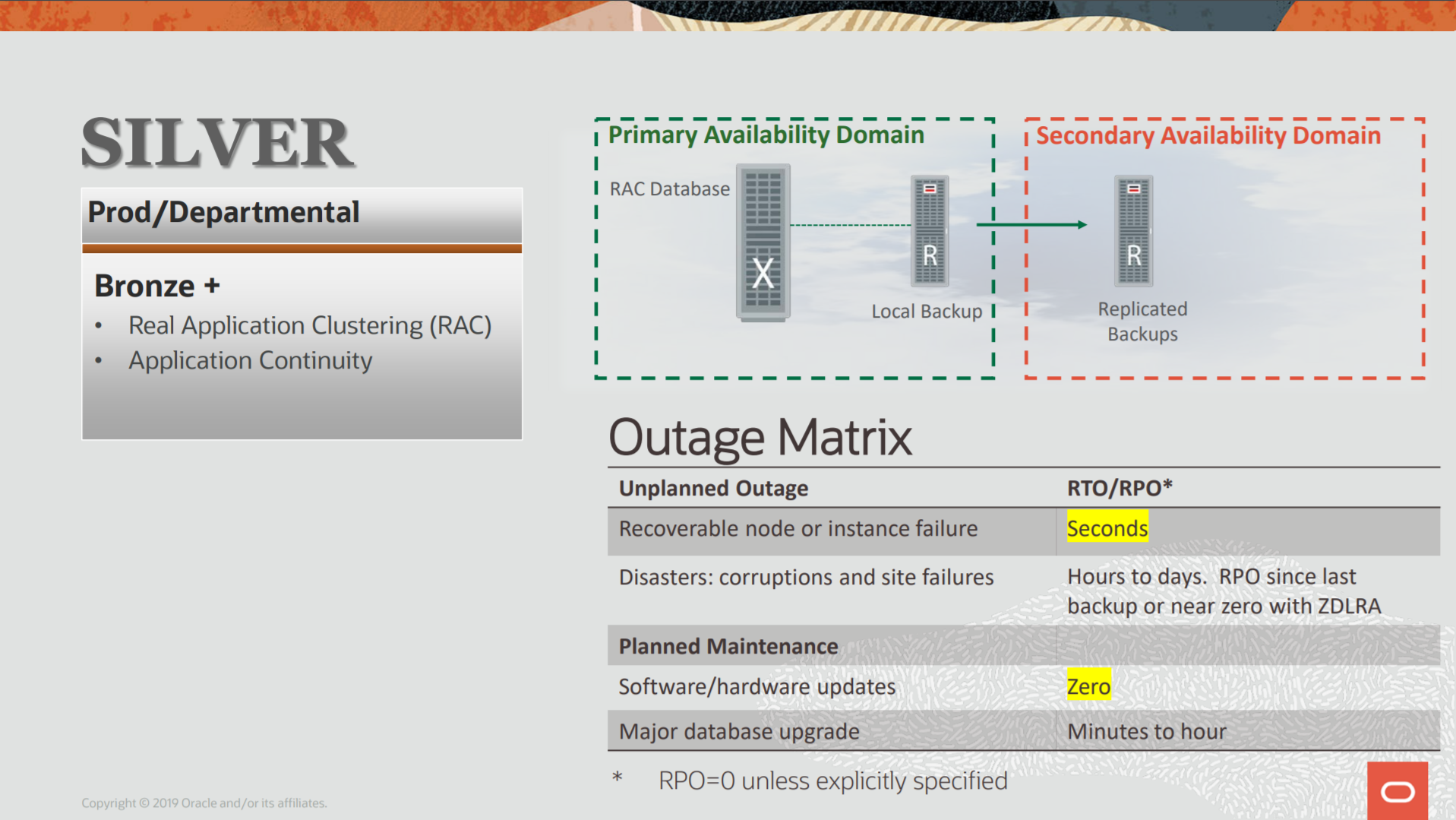

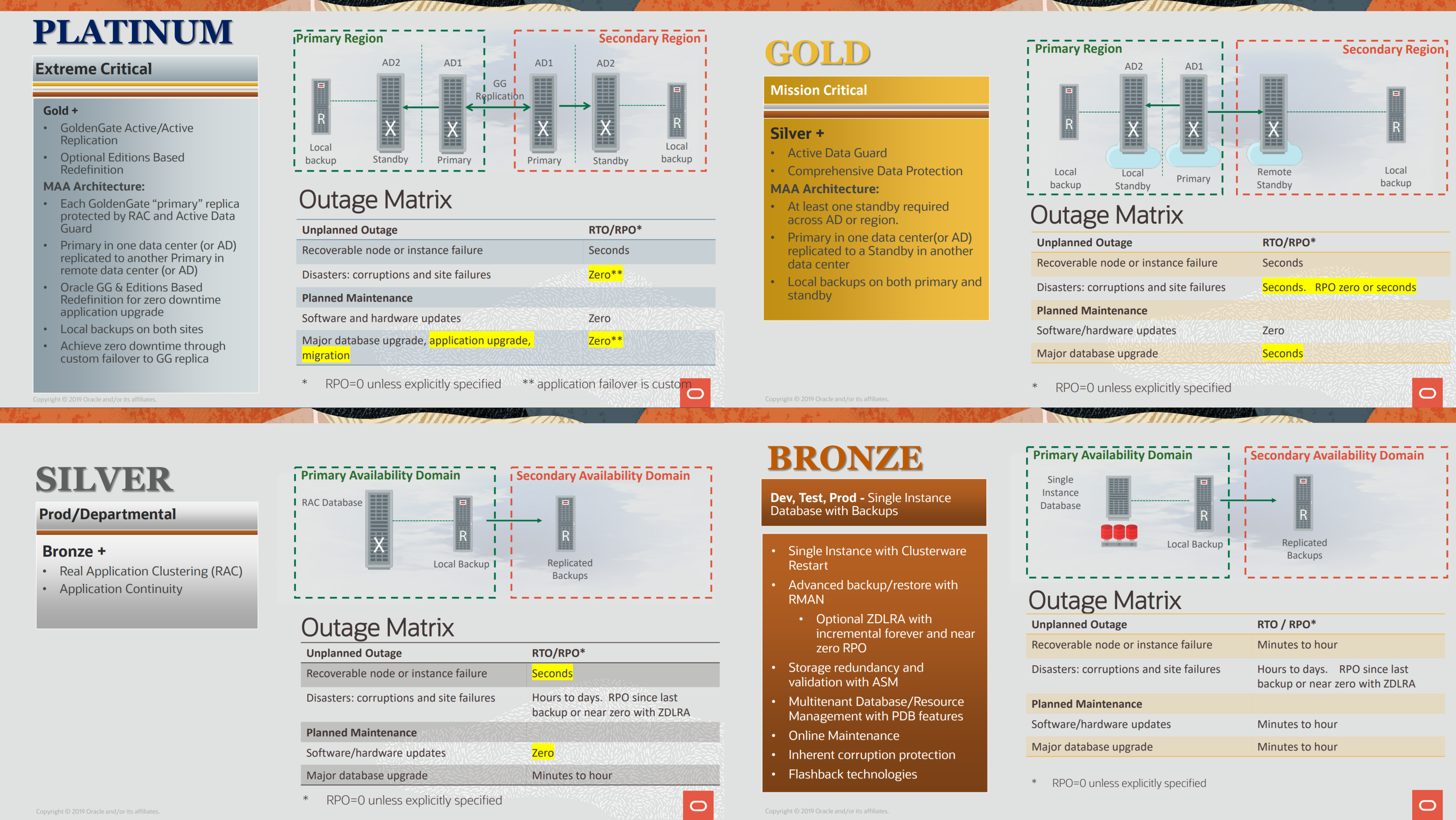

The main target for this post is to have the pdb from DC2 protected by the ORADBDC1 at the DC1. I used RAC and ASM because this is usually the normal configuration for the MAA (following the recommended architectures baseline) when using DG. This increases the protection and reduces the SPOF of your environment.

DG PDB

The idea of DG PDB differs a little from what we see commonly for Data Guard, here each container have own life. This means that only the pdb is protected and not the entire cdb. This puts the DG PDB close to Cloud than On-Prem because it fit perfectly at the OCI structure since you can create your pdb in one region and choose another region to protect it. And even closer if you think for Autonomous Database that your ownership is pdb only. I will not say that is good or not, but is linked to how Oracle works with OCI. Personally, I prefer to have normal DG configured to protect my databases and I choose where I want to open my pdb (maybe they add this feature in the future).

Another detail is that DG PDG (from now) works only in MaxPerformance mode, so, there is no SYNC mode for the archive destinations. There are more limitations for the DG PDB and you can check it in the topic DG PDB Configuration Restrictions from official documentation (I recommend that you read it).

Please read my new blog post about the new changes to the process. You can see how the process evolved and it is better. Read it here.

Click here to read more…